UK anthropologists (and other academics) may have spent their holidays poring over, gossiping about, ignoring, or otherwise relating to the release of the results of the 2008 research assessment exercise (RAE). Those of us outside the UK have probably heard of this gargantuan undertaking, which aims to assess the quality of research conducted at university departments with a view to better distributing funding. I think I first heard of the RAE as a prime example of the ‘audit culture’ that in many places these days seems to be the guiding ethos of scholarship. Complaints about the RAE, and about audit in general, can be heard far and wide in universities across Europe and elsewhere. Audits often create bureaucracies that are expensive in their own right; they put onerous burdens on already over-worked teachers and scholars; they replace complex forms of assessment with simplified formulae in order to render research ‘legible’ (assessable) to bureaucrats; truly cutting-edge or paradigm-shifting research cannot be ‘seen’ in this setting; and so on. All of these criticisms are voiced by some at the Guardian (among other places). Other emerging complaints include ways that departments can ‘game’ the system to produce a misleading result. My understanding of the basic procedure is that departments nominate research staff to submit four publications that are then assessed by a peer-led panel, each publication being given a ranking (roughly, 4 = internationally important, 1 = unimportant anywhere). Departments apparently engage in a calculus of how many and which staff members to include for assessment, in order to yield the highest result. They may decline to include staff who will not get a high score, or they may hire academic ‘stars’ on unusual contracts, in order to be able to include them. This article details some of the ways this gaming may have occurred in the 2008 exercise.

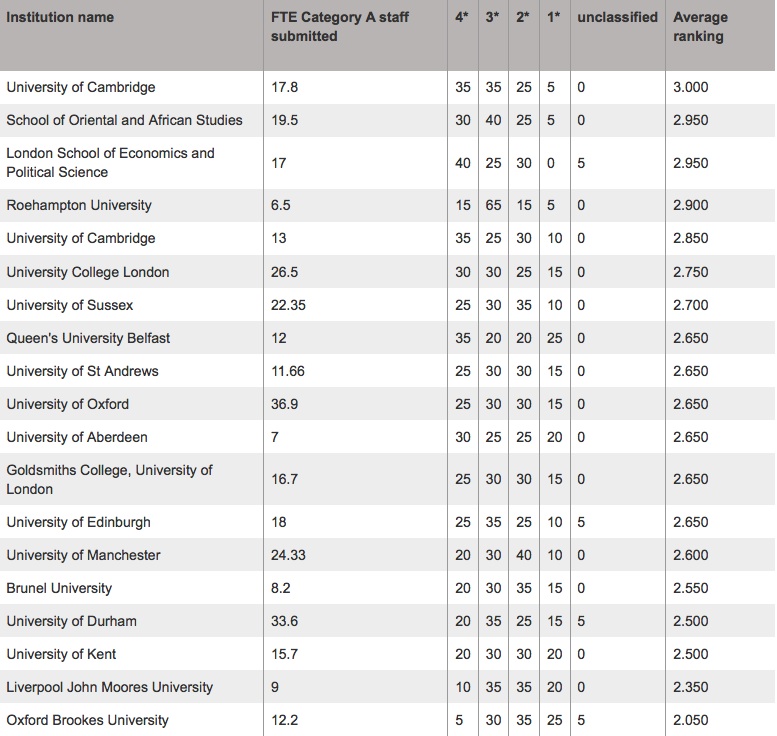

Below I append the 2008 results for ‘anthropology’ (Cambridge has two results, one for ‘social anthropology,’ the other for ‘biological anthropology’):

Though I am personally deeply distrustful of these sorts of rankings, feeling that they utterly fail to capture the complex ways in which hierarchies of reputation (which I think are inseparable from putatively objective assessments of quality) are established, they are kind of amusing to talk about. While the results displayed above roughly comport with my sense of the UK social anthropology scene, one result stood out: the low ranking at Manchester. I find it rather shocking that a department with a historical reputation such as Manchester’s should not end up in (even) the top half of the schools being ranked. What’s up with that? Meanwhile, this particular ranking of UK departments points up, to me, the fact that a similar recent assessment of US departments is nowhere to be found (to my knowledge). The last results from the US National Research Council for anthropology were produced in, when?, 1995? Anyone care to take a stab at a (purely subjective) Top 10 list of US departments? What about a Worldwide Top 10?

I have not seen those 1995 rankings before and found some of them surprising.

If I were going to rank today I think that I would have to do one set for ‘general anthropology’ that encompassed multiple fields and approaches and included schools that turn out very well-trained anthropologists in socio-cultural, archaeological, biological and linguistic anthropology. Doing all four is hard these days and these schools do them all well. That list would include (in no particular order): Michigan, Berkeley, Chicago, Stanford and Emory (yes, I know that is a surprise but they really turn out amazing students).

After that I would have to rank by subfield and would have to expand the scope to some non-US institutions.

I spend a bit of time ranking the environmental anthropology programs for students because I have lots of fantastic undergraduates who apply to them.

I always include the following schools in that list (in no particular order): Berkeley, Stanford, Yale (IF and only if you are admitted to FES), Rutgers (if you do the joint program with Human Ecology), Michigan (if you do anthropology and SNR), Arizona, Washington, Duke, U of Kent at Canterbury, ANU.

But the rankings are always a little wonky because each student is different and would do better or worse in different programs.

I also worry, a bit, about the disciplinary obsession with particular elite schools because of the way that it affects hiring and the focus of other departments. If a school is biased towards graduates of program X and tends to hire people mostly from program X then their faculty does not have a varied and diverse set of perspectives because they were all trained by the same faculty at program X.

A general ranking, especially in a field as complicated and diverse as anthropology, is pretty difficult, if not downright silly. Moreover, one would want to differentiate a ranking of training programs vs. research programs.

But we all rank them informally all the time, right? One thing about any up-to-date ranking is that it would be out-of-date pretty quickly since the departments are almost all constantly changing, thanks to the “top” scholars who are more and more independent contractors out for a better deal somewhere else. Students and institutions suffer in this context, even as the schools think they are increasing their prestige by snatching top hires. So perennial favorites in anthropology like Chicago are I think threatened by the fact that faculty are apparently fleeing them.

All that said, I think it’s clear that Berkeley is blowing everyone else away right now, right across the board: Mahmood, Briggs, Rabinow, Scheper-Hughes, Ong, Caldeira/Holston, and others are really defining a big chunk of anthropological conversation. Michigan is but a dim shadow of the bright anthropological lights of Berkeley. Meanwhile, Chicago really seems to struggle to find a voice and identity. NYU is humming along impressively. Stanford’s recent reorganization needs to be given time to congeal into something (note departure of Gupta). UCSD has made very interesting decisions.

I think you’d want a few lists. Big programs (Berkeley, Chicago, Michigan, Penn, Emory, CUNY, et al.) and small programs (Hopkins, New School, Rice). Where to situate someplace like Santa Cruz gets difficult.

As someone who just went through the process of applying to 9 programs across the country, this is a pretty interesting topic. For me, it wasn’t about finding the TOP anthropology program in the US, but finding a program that worked well with what I am trying to do.

I am not sure how programs can be ranked across the board in any meaningful way, especially since different universities have very different academic goals and philosophies.

However, if there was to be some kind of ranking system, it would have to be about more than big names. What about how well the program functions? Does the faculty work well together? Can grad students get through the program in a reasonable amount of time? Can they get funding? Can they get jobs when they get out, or do they go back to waiting tables?

Having a faculty full of big names is great and all, but there are other critical elements to an anthropology program, IMO.

It’s interesting to me that certain schools are always mentioned right off the bat as some of the best. Berkeley and Chicago come to mind. Are they mentioned because of their historic reputations, or because they actually have better programs?

grizzly: for the sake of argument, as a former new school student i would not put their program on a top anything list in its current incarnation. just mho, ymmv, etc etc. however, i do agree that smaller programs do need consideration.

in general for the sake of argument: when we talk about “top grad programs” what do we mean by ‘grad student’? phd? ma? both? i ask this because of the mention of ‘can students get funding?’ ma students rarely, if ever, get funding… but ma students are writing theses, are doing original research, etc. etc.

I took a quick look at Roehampton’s website and it says they do evolutionary anthropology and especially primatology. So, they very well might be highly regarded in this field (and that explains the contrast with the other schools on the list). As for Manchester, I think it’s more about the other schools than Manchester specifically. Like the Americans that have responded haven’t said Columbia, despite its historical importance, etc.

Here in Oz, we’re about to suffer through a similar audit process because, being an insecure intellectual nation, we’re going to emulate the worst policies of all our larger, more august rivals. It’s not the ranking I dread, it’s all the damn paperwork that will inevitably result, the pre-audit planning meetings, etc.

What I’m struck by in the results is that, in reality, most of the group is clustered pretty tightly. Queen’s, St. Andrews, Aberdeen, Oxford, Goldsmiths, and Edinburgh all ‘score’ a 2.650 (so much precision!). This, in spite of the fact that these are very different places, with very different strengths, profiles, grad experiences, etc. So all of this energy, time and money went into creating a ranking system that tells us these six anthropology departments are roughly comparable; something tells me the resources could have been better spent.

My opinion is that, if we’re going to go down the road of KPIs (key performance indicators, for those not forced to ingest regular quantities of this stuff), we should just get rid of the entire subjective evaluation dimension of this. Just multiply each publication by its citation rate and pick a multiplier for other basic factors, for example: (100 x rate of PhD completion as a decimal) + (number of copies of book x .01) + (number of reviews of book in academic journals x 3) + (number of keynote presentations x 2) + (number of times testifying to Congress/Parliament x 20) + … etc. If we’re going to have faux objectivity and quantitative measures, why not just go nuts with it, and totally remove the paperwork fatigue by making the indicators ungame-able and utterly objective, so that they take a couple of hours to put into a machine-readable bubble sheet? And then we could have individual scores as well as department scores, and we could see more clearly who’s pulling the department up and who’s dragging us down…

…not that I’m irritated by the audit culture or anything…
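For anyone tempted to take the formula above literally, here is a minimal Python sketch of it. The weights are the ones quoted in the comment; the class and field names are made up for illustration, the per-publication citation multiplier and whatever hides behind the “… etc.” are left out rather than guessed, and the sample numbers are invented.

```python
from dataclasses import dataclass


@dataclass
class ScholarRecord:
    """Hypothetical inputs for the tongue-in-cheek KPI formula."""
    phd_completion_rate: float  # as a decimal, e.g. 0.8 for 80%
    book_copies: int            # number of copies of the book
    book_reviews: int           # reviews in academic journals
    keynotes: int               # keynote presentations
    testimonies: int            # times testifying to Congress/Parliament


def kpi_score(record: ScholarRecord) -> float:
    """Sum only the terms spelled out in the comment, with its weights."""
    return (
        100 * record.phd_completion_rate
        + 0.01 * record.book_copies
        + 3 * record.book_reviews
        + 2 * record.keynotes
        + 20 * record.testimonies
    )


# Invented example: 80% completion, 1000 copies, 4 reviews,
# 2 keynotes, 1 parliamentary testimony.
example = ScholarRecord(0.8, 1000, 4, 2, 1)
print(round(kpi_score(example), 2))
```

Note that a single trip to Parliament is worth as much here as 2000 copies of a book, which is roughly the point being made about faux objectivity.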

Thanks Greg. I think in fact the RAE in the UK is to be replaced by the ‘research excellence framework,’ which will be organized in a manner not dissimilar from what you describe here. People are however criticizing this move, suggesting that peer assessment must be kept central to future audits. See:

http://www.timeshighereducation.co.uk/story.asp?sectioncode=26&storycode=404926

As a member of staff at Manchester’s social anthro department I can’t hide my persuasion 🙂 Just wanted to note that Manchester made a so-called ‘inclusive’ submission to the RAE: that is, we got all our members of staff (early career researchers and profs alike) to send their 4-piece submission. In this sense, we deliberately opted out of the ‘stars only’ approach favoured by some other institutions.

You may want to know that some league tables do take account of the inclusive dimension. They call it ‘research power’ and it is a measure of combined volume and quality. The idea here is to note that a single brilliant person does not make as much impact as a big department with some brilliant and many good people doing lots of research. On this count (which, incidentally, is the one used by government to allocate funding), Manchester comes 4th.
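To make the difference between an average-based table and a ‘research power’ table concrete, here is a rough Python sketch. It assumes that a department’s quality profile (the percentage of work at each star level) is collapsed into a grade point average, and that ‘research power’ is that average multiplied by the number of staff submitted. Neither formula is spelled out in this thread, so both are assumptions, and the two departments and all their numbers are invented.

```python
def grade_point_average(profile):
    """Weighted mean star rating.

    `profile` maps a star level (0-4) to the percentage of submitted
    work judged to be at that level.
    """
    total = sum(profile.values())
    return sum(stars * pct for stars, pct in profile.items()) / total


def research_power(profile, staff_submitted):
    """Assumed definition: average quality times volume of staff submitted."""
    return grade_point_average(profile) * staff_submitted


# Hypothetical 'stars only' submission: few staff, strong profile.
selective = {4: 30, 3: 40, 2: 25, 1: 5, 0: 0}
# Hypothetical 'inclusive' submission: everyone submitted, flatter profile.
inclusive = {4: 20, 3: 35, 2: 35, 1: 10, 0: 0}

for name, profile, staff in [("selective", selective, 10),
                             ("inclusive", inclusive, 25)]:
    gpa = grade_point_average(profile)
    power = research_power(profile, staff)
    print(name, round(gpa, 2), round(power, 2))
```

In this made-up case the selective department comes out ahead on the average while the inclusive one comes out well ahead on power, which is the pattern described above.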

Thanks Alberto for clarifying some of this. It seems that different strategies adopted by different departments yield different ‘spins’ as to the meaning of the results. Thus, Queen’s University in Belfast “notes”:http://www.qub.ac.uk/schools/SchoolofHistoryandAnthropology/NewsandEvents/

bq. The 2008 RAE results have confirmed Queen’s University’s reputation as a world leading centre for research in Anthropology. The exercise shows that 35% of Anthropology research at Queen’s is world leading (4*). Only two other Anthropology departments in the UK performed at this top level.

While the Department of Anthropology at Durham University “says”:http://www.dur.ac.uk/anthropology/research/rae2008/

bq. Durham ranked second in RAE 2008

bq. The Department of Anthropology has been ranked second in the UK for the volume of research rated as world-leading (4*) or internationally excellent (3*). Unlike most departments, in the Research Assessment Exercise 2008 we submitted over 95% of our staff to the RAE and these results are thus a clear indication of the high quality of our research at Durham. These results also confirm our earlier report based on citations, a metric likely to play a leading role in the new Research Excellence Framework. Because departments do not receive a single rating, but instead receive a profile of scores, there are several differing ‘league tables’ in circulation, each of which uses a different method of ranking universities by RAE results. A true picture of the research quality of a department can only be obtained when we factor in the number of researchers assessed. It is clear that at Durham our high level of world class productivity marks us out as one of the true ‘centres of excellence’ in Anthropology within the UK.

The Durham website links to “this table”:http://www.dur.ac.uk/resources/anthropology/events/DurhamrankedsecondinRAE2008.pdf which offers a different picture from the one published above (which I located in the Guardian).

Good, now that’s over with for another little while, perhaps the departments can get back to paying some attention to teaching.

No?

Having recently moved to the Netherlands, I can now add some information about the Dutch research assessment system to the picture. Here, you get only twice as many points for publishing an authored book as for an article (including a co-authored one) in a refereed journal. This system is obviously even more skewed in favour of natural scientists (and sociologists!) than the Australian one. Another curiosity that shows how un-universal these supposedly universal criteria are is that not only journals but also publishers are ranked, and apparently you get more points for publishing with Routledge or Oxford UP (which are ranked “A”) than with the University of Washington Press (because it’s farther from Europe?)

It is a shame that poor Roehampton University, after doing so well without the symbolic support larger institutions enjoy, should be used as an example to confirm other people’s scepticism about the RAE. Of course the RAE is a problematic form of assessment, but it does tell us something: that a new institution can put itself high in the ranks through hard work and judicious management. Let us not forget that there are some very good scholars at that institution, and that maybe that had a little to do with their recent success. It may teach us that we at the more established institutions should not rest on our laurels.